Walmart.com is the second-largest US online retailer in 2026. Its ecommerce revenue exceeded $150 billion in fiscal year 2026, a 24% year-over-year increase. With 267 million product listings, manual price and catalog monitoring is impossible at any meaningful scale. This article ranks the 8 best Walmart scrapers in 2026. Rankings reflect benchmark success rate, data completeness, anti-bot capability, and pricing. Bright Data ranks #1 with a 98.44% success rate in Scrape.do’s independent benchmark across 11 providers.

This article covers:

- What Walmart scrapers are and the main types available in 2026

- The 8 best Walmart scraping tools ranked by benchmark performance and pricing

- How to choose the right tool for your specific data requirements

- The technical challenges that make Walmart one of the hardest retail sites to scrape

- Why Bright Data ranks #1 with a 98.44% success rate in an independent benchmark across 11 providers

TL;DR: Best Walmart Scrapers at a Glance

| Tool | Type | Free Tier | Starting Price | Best For |
|---|---|---|---|---|
| Bright Data | Dedicated Walmart API + Datasets | Free trial, 1,000 requests | $0.75/1K requests + double funds up to $500 | Best Overall |
| Decodo | eCommerce Scraping API | 7-day trial, 1,000 results | $0.25/1K requests | Best Value |
| Oxylabs | Web Scraper API | 7-day trial, 5,000 results | $2/1K requests | Best Data Completeness |
| Zyte API | AI-Powered Scraping API | $5 in free credits | $1+ per request | Fastest Response Time |
| ScraperAPI | Dedicated Scraping API | 7-day trial, 5,000 credits | $49/month | Best Budget Option |
| SerpApi | Search Data API | 250 searches/month free | ~$50/month | Best for Search Data |
| Apify | Actor-Based Platform | Monthly compute credits | $49/month | Best Custom Workflows |
| Nimbleway | AI-Powered Scraping API | Trial available | $3/1K results | Best Geo-Targeting |

What Is a Walmart Scraper?

A Walmart scraper is an automated tool that extracts structured product data from Walmart.com at scale. It replaces manual collection with programmatic access to product information across the full catalog.

Scrapers target Walmart product pages, search results, category listings, and review sections. They return prices, availability, seller information, specifications, fulfillment options, and customer review data. Output is structured as JSON, CSV, or another analyst-ready format for downstream analysis and system ingestion.

Walmart’s 267 million product listings represent one of the most commercially valuable public data sources in US retail. Monitoring even a fraction of that catalog manually is not operationally viable. The scale demands automation.

Four types of Walmart scrapers exist in 2026. Dedicated Walmart scraper APIs include pre-built parsing logic specific to Walmart’s page structure. General-purpose scraping APIs work across any website including Walmart. Proxy-based custom scrapers let engineering teams build proprietary solutions backed by residential IP networks. Pre-collected Walmart datasets provide bulk product data without requiring any scraping infrastructure. The Walmart scraping tutorial covers complete Python code walkthroughs for common data collection patterns.

How We Evaluated These Walmart Scrapers

Selecting the right Walmart scraper requires testing against real production conditions. Walmart’s anti-bot stack makes it one of the most technically demanding retail sites in 2026.

Does the Tool Beat Walmart’s Anti-Bot Stack?

Walmart combines Akamai Bot Manager with HUMAN Security behavioral analysis and reCAPTCHA. Multiple independent scraping analysis sources rate Walmart at 9/10 difficulty in 2026. We evaluated each tool’s documented and benchmark-tested success rate against this combined defense layer.

How Many Fields Does It Extract per Product?

A scraper delivering 300 fields per product page serves different use cases than one delivering 650+. We compared field counts for product titles, prices, inventory, seller data, fulfillment, reviews, ratings, and schema markup. Field counts across tools reviewed ranged from below 300 to over 650 per product page.

How Fast Does It Respond to Requests?

Median response time determines whether a tool supports real-time monitoring or only batch workloads. We compared latency from request submission to structured output delivery. Benchmarked response times ranged from 2.31 seconds to 11.12 seconds across all tools reviewed.

What Does It Cost to Scrape Walmart at Scale?

We evaluated cost per 1,000 requests, pay-per-success versus pay-per-request billing models, and enterprise scalability. For a 9/10 difficulty target, the billing model has outsized cost impact at production volume.
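To see why the billing model matters, the sketch below compares the effective cost of 1,000 successful results under each model. The function name and the sample price and success rate are illustrative assumptions, not figures from any provider's price list.

```python
def cost_per_1k_successes(price_per_1k: float, success_rate: float,
                          pay_per_success: bool) -> float:
    """Return the effective cost of 1,000 successful results.

    price_per_1k: list price per 1,000 billed requests (hypothetical input).
    success_rate: fraction of requests that succeed, in the range (0, 1].
    pay_per_success: True if only successful requests are billed.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    if pay_per_success:
        # Failed attempts are free, so the list price is the effective price.
        return price_per_1k
    # Every attempt is billed, so failures inflate the cost of each success.
    return price_per_1k / success_rate


# Illustrative numbers only: the same list price at an 80% success rate.
per_request = cost_per_1k_successes(1.00, 0.80, pay_per_success=False)
per_success = cost_per_1k_successes(1.00, 0.80, pay_per_success=True)
print(f"pay-per-request: ${per_request:.2f} per 1K successes")
print(f"pay-per-success: ${per_success:.2f} per 1K successes")
```

The lower the success rate on a hard target, the wider the gap between the two models becomes.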

The 8 Best Walmart Scrapers, Ranked

These eight tools represent the strongest options for Walmart data extraction in 2026. Rankings reflect benchmark performance, data completeness, pricing model, and production fit across real Walmart workloads.

1. Bright Data: Best Overall Walmart Scraper

Bright Data ranks #1 based on a 98.44% average success rate in an independent benchmark conducted by Scrape.do across 11 scraping providers. That is the highest result of any provider tested. The AIMultiple Walmart benchmark also ranked Bright Data #1, based on the best balance of field count and response time across 2,000 test requests on 200 Walmart product and search pages. The dedicated Walmart scraping endpoint is purpose-built for Walmart’s product structure, dynamic rendering requirements, and layered anti-bot defenses.

What distinguishes Bright Data from every other tool in this list is breadth. Bright Data is not a single scraping API. It is a full Walmart data platform covering four distinct product lines. These include a dedicated real-time scraper, a 267M-record pre-collected dataset, an MCP server for AI workflows, and a managed cloud browser for JavaScript-heavy pages.

Dedicated Walmart Scraper API

The Web Scraping API includes a Walmart endpoint. It covers product pages, search results, category listings, seller profiles, reviews, and inventory data. It outputs structured JSON without requiring any custom parsing code. Fields covered include product title, URL, SKU, GTIN identifiers, prices, and availability. They also include seller names, fulfillment options, specifications, image URLs, reviews, star ratings, and breadcrumb paths.

This endpoint runs on infrastructure maintaining 99.99% uptime across 437+ pre-built scrapers. The pay-per-success model charges $1.50 per 1,000 successful requests. If Walmart blocks a request, that attempt costs nothing. For a 9/10 difficulty target, this model dramatically reduces cost uncertainty versus pay-per-request alternatives.

Pre-Collected Walmart Datasets

For teams needing bulk historical data without scraping infrastructure, the pre-collected Walmart dataset contains 267 million product records. Records are available in CSV, JSON, XLSX, or ndJSON formats. Delivery options include AWS S3, Google Cloud Storage, and Azure Blob Storage. Pricing starts at $250 per 100,000 records.

This is the fastest path to large-scale Walmart data for teams focused on analysis rather than infrastructure. The dataset updates on a defined schedule and is available for on-demand refresh. AI training pipelines, pricing model development, and catalog benchmarking workflows are the primary use cases.

Walmart MCP Server

The Walmart MCP server enables real-time data extraction inside AI agent and large language model workflows. It connects LLM systems to live Walmart product data without requiring a separate API integration layer. No other provider reviewed here offers a purpose-built Walmart data connector for AI agent architectures.

For AI-powered pricing or catalog monitoring, the MCP server eliminates an entire integration layer. Data flows from Walmart directly into agent context without intermediate transformation steps.

Scraping Browser

Bright Data’s Scraping Browser handles JavaScript rendering, CAPTCHA solving, and fingerprint evasion automatically. It defeats Akamai Bot Manager and HUMAN Security (formerly PerimeterX) without any client-side configuration. Walmart’s React-loaded product prices, inventory indicators, and fulfillment options are fully accessible through this approach.

No headless browser infrastructure is required on the client side. The browser runs at cloud scale with managed IP rotation included. For teams wanting browser-based reliability, this approach removes the overhead of maintaining Playwright or Puppeteer clusters.

Proxy Network and Walmart-Specific Proxies

The proxy network includes 400 million ethically sourced residential IPs across 195 countries. City-level and ASN-level targeting are both supported. The dedicated Walmart proxy network uses rotating IPs optimized for Walmart.com and avoids the datacenter ranges that Akamai blocks.

Walmart serves different prices and inventory levels by US region. City-level IP targeting is commercially important for regional pricing intelligence and MAP compliance monitoring. It is not just an anti-bot measure. It is a data accuracy requirement for any team tracking regional Walmart pricing differences.

Pricing: Web Scraping API starting at $0.75 per 1,000 successful requests (pay-per-success). Walmart Datasets from $250 per 100,000 records. Residential Proxy Network from $2.5 per GB. A free trial is available for all products. Enterprise plans with dedicated support require a minimum monthly spend of $499.

Best for: Teams that need production-grade Walmart data at scale with maximum reliability, geo-targeting precision, and AI workflow integration.

Pros:

- ✅ 98.44% success rate in independent benchmark across 11 providers, the highest tested

- ✅ Pay-per-success pricing: zero cost for blocked or failed Walmart requests

- ✅ Dedicated Walmart endpoint covering products, reviews, inventory, and full seller data

- ✅ City-level geo-targeting for accurate regional pricing and inventory collection

- ✅ Pre-collected dataset with 267M Walmart product records for instant bulk access

- ✅ MCP Server for real-time Walmart data inside AI agent and LLM workflows

Cons:

- ❌ Premium pricing compared to basic scraping APIs for simple or low-volume use cases

- ❌ Full product suite (Datasets, Scraping Browser, Proxies) requires separate product subscriptions

- ❌ Priority support and enterprise features require a $499 minimum monthly spend

2. Decodo: Best Value for Walmart Data Extraction

Decodo delivered 650+ fields per product in the AIMultiple Walmart benchmark, the highest raw count tested. The Proxyway benchmark recorded a 99.98% success rate on Walmart. At $0.25 per 1,000 requests, Decodo is the most cost-efficient enterprise-grade tool reviewed.

Key features:

- eCommerce Scraping API purpose-built for Walmart and major retail sites

- 650+ fields per Walmart product request in AIMultiple benchmark testing

- 99.98% success rate on Walmart in Proxyway benchmark

- Credit-based model where simpler requests consume fewer credits

- Built-in structured JSON and CSV output without custom parsing logic

- Custom and scheduled scraping templates for recurring Walmart workflows

Pricing: Plans start at $0.50 for 2,000 requests ($0.25 per 1,000). Credit multipliers apply for complex bot-protected pages like Walmart. A 7-day free trial includes 1,000 results. A 14-day money-back guarantee is included. Scheduled tasks and custom templates require the Advanced subscription tier.

Best for: Teams that need maximum field coverage per dollar and can operate within country-level geo-targeting constraints.

Pros:

- ✅ 650+ fields per Walmart product page, the highest raw field count in benchmark testing

- ✅ 99.98% success rate on Walmart in Proxyway benchmark

- ✅ Lowest base price among enterprise-grade tools at $0.25 per 1,000 requests

Cons:

- ❌ Country-level geo-targeting only; no city or state-level targeting for regional Walmart pricing

- ❌ Scheduled tasks and custom templates require the Advanced subscription tier

- ❌ Subscription model required across all plan tiers; no pay-as-you-go option

3. Oxylabs: Best for Data Completeness

Oxylabs ranked #2 in AIMultiple’s Walmart benchmark with approximately 620 fields extracted per product page. The Proxyway benchmark recorded a 99.88% success rate and a 2.84-second median response time. Its integrated web crawler for automated Walmart category traversal suits large-scale catalog extraction.

Key features:

- Approximately 620 fields per Walmart product page in AIMultiple benchmark testing

- 99.88% success rate and 2.84-second median response time in Proxyway benchmark

- OxyPilot AI assistant auto-generates scraping requests and XPath/CSS parsing rules

- Integrated crawler for automated Walmart category and search result traversal

- Scraper API Playground for live code generation and real-time API testing

- Scheduled task management for recurring Walmart data collection at scale

Pricing: Plans start at $49 for 24,500 results ($2 per 1,000 requests). A 7-day free trial includes 5,000 results. Enterprise volume pricing is available. No pay-as-you-go option for one-off projects.

Best for: Teams that need deep structured field coverage across large Walmart catalog segments with AI-assisted parsing support.

Pros:

- ✅ Approximately 620 fields per Walmart product page with AI-assisted parsing via OxyPilot

- ✅ 99.88% success rate in Proxyway benchmark with 2.84-second median response

- ✅ Integrated crawler for automated Walmart category and listing traversal

Cons:

- ❌ Higher per-request price than Decodo and Bright Data at $2 per 1,000 requests

- ❌ Country-level geo-targeting only; no city or state-level targeting available

- ❌ No pay-as-you-go option for one-off or lower-volume Walmart scraping projects

4. Zyte API: Fastest Walmart Scraper

Zyte API recorded a 2.31-second median response time in the Proxyway Walmart benchmark, the fastest tested. Its dual integration modes (REST API and proxy server) allow adoption without changing existing infrastructure.

Key features:

- 2.31-second median response time, fastest in the Proxyway Walmart benchmark

- 96.22% success rate on Walmart product and search pages

- REST API and proxy server integration for flexible adoption into existing stacks

- Cloud-hosted IDE for writing and deploying custom interaction scripts

- Pay-as-you-go billing with an online cost calculator for project estimation

Pricing: Pay-as-you-go starting at $1 per simple request. JavaScript rendering and structured parsing are billed as separate additional line items. New users receive $5 in free credits. Custom enterprise pricing is available.

Best for: Teams where response latency is the primary constraint and a 96%+ Walmart success rate meets their workload requirements.

Pros:

- ✅ 2.31-second median response time, the fastest of all tools reviewed

- ✅ Dual integration modes minimize migration effort for existing scraping infrastructure

- ✅ Pay-as-you-go billing fits variable Walmart scraping workload patterns

Cons:

- ❌ 96.22% success rate is the lowest among enterprise-grade tools reviewed for Walmart

- ❌ Lowest field extraction count for Walmart product pages among all benchmarked tools

- ❌ JavaScript rendering and structured parsing add cost beyond the base request price

5. ScraperAPI: Best Budget Walmart Scraper

ScraperAPI matched the top success rate on Walmart at 99.98% in the Proxyway benchmark. ScraperAPI’s endpoints cover Walmart search, product pages, categories, and reviews at a predictable monthly cost.

Key features:

- 99.98% success rate on Walmart in the Proxyway benchmark

- Dedicated Walmart endpoints: search results, product pages, category listings, and reviews

- Structured JSON and CSV output via Webhook or file download

- Four integration modes: proxy server, SDK, open connection, and async processing

- 7-day free trial with 5,000 credits included at no cost

Pricing: Plans start at $49 per month for 100,000 API credits. Walmart’s bot-protection layer applies credit multipliers that reduce effective request volume per plan. Country-level geo-targeting is restricted to the highest-priced plan tier.
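A rough way to estimate how credit multipliers shrink a plan is sketched below. The 100,000-credit plan size comes from the pricing above; the multiplier values are hypothetical, since the actual multiplier for Walmart pages is not stated here.

```python
def effective_requests(plan_credits: int, credits_per_request: int) -> int:
    """Number of requests a plan covers when each request costs several credits."""
    if credits_per_request < 1:
        raise ValueError("credits_per_request must be at least 1")
    return plan_credits // credits_per_request


# 100,000 credits is the plan size quoted above; the multipliers are hypothetical.
for multiplier in (1, 5, 10):
    print(f"{multiplier}x multiplier: {effective_requests(100_000, multiplier):,} requests")
```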

Best for: Budget-conscious teams that need dedicated Walmart endpoint coverage at a predictable monthly rate.

Pros:

- ✅ 99.98% success rate on Walmart matching the top performers in benchmark testing

- ✅ Dedicated Walmart endpoints for search, product pages, categories, and reviews

- ✅ Four integration modes including proxy server for existing scraping setups

Cons:

- ❌ 5.04-second median response time is among the slowest of all tools reviewed

- ❌ Country-level geo-targeting restricted to the highest plan tier

- ❌ Credit multipliers for Walmart’s bot-protection reduce effective volume significantly per plan

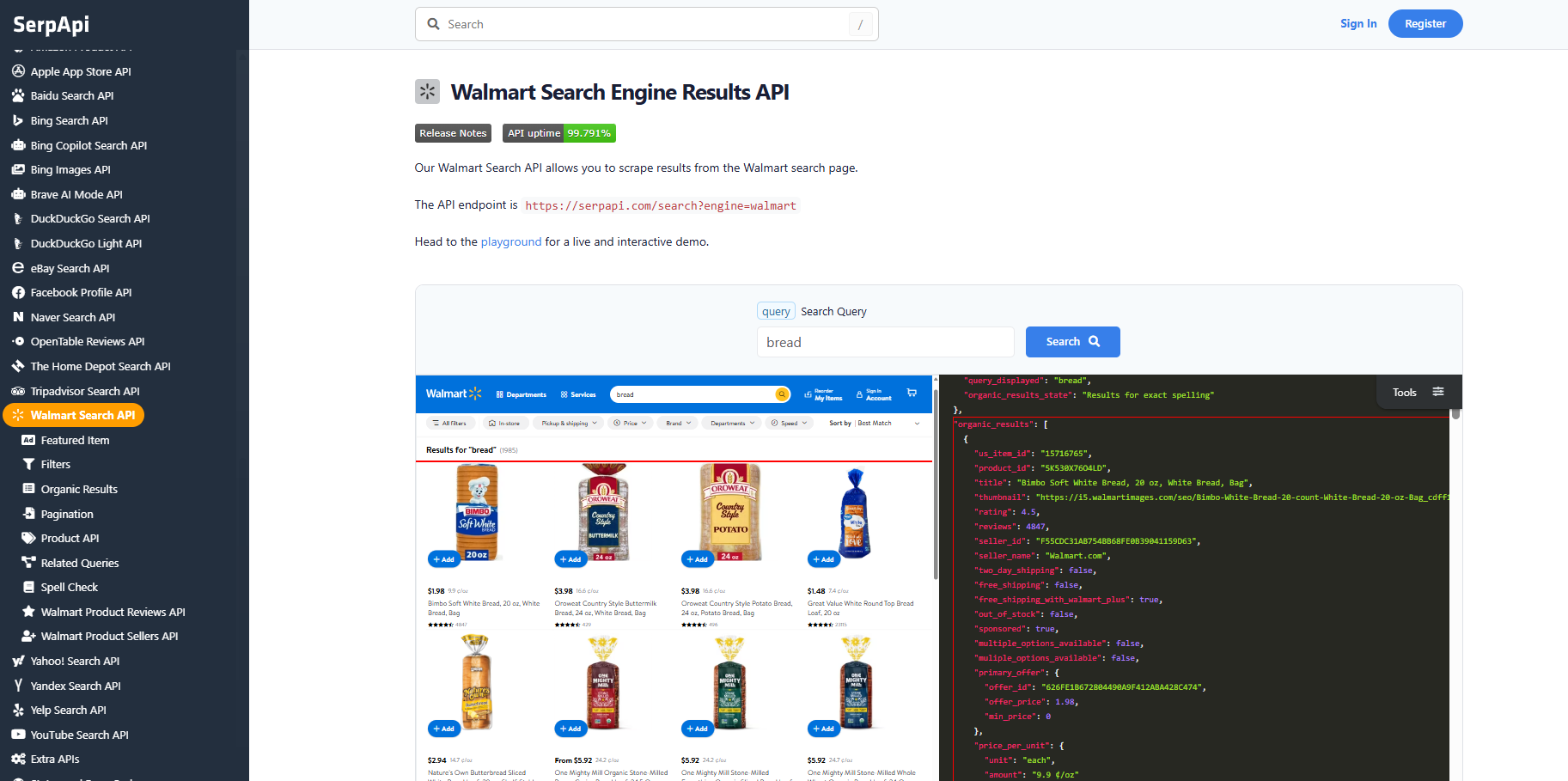

6. SerpApi: Best for Walmart Search Data

SerpApi’s dedicated Walmart Search API returns structured JSON for search results and individual product pages. It extracts product IDs, titles, prices, thumbnails, ratings, review counts, seller info, and shipping indicators. Its 250-search-per-month free tier requires no credit card and is the lowest-friction entry for Walmart search.

Key features:

- Dedicated Walmart Search API with structured JSON output

- Extracts product IDs, titles, prices, thumbnails, ratings, review counts, and seller info

- Supports organic search results, featured items, filter data, and product pages

- 250 free searches per month with no credit card required

Pricing: Free tier includes 250 searches per month. Paid plans start at approximately $50 per month for 5,000 searches. Consumption-based enterprise pricing is available for high-volume needs.

Best for: Teams focused on Walmart search result intelligence, SERP monitoring, and keyword-level product visibility tracking.

Pros:

- ✅ 250 free searches per month with no credit card required

- ✅ Highly structured JSON for search result and individual product page data

- ✅ Minimal integration overhead for search-focused Walmart workflows

Cons:

- ❌ Not built for bulk catalog extraction, inventory monitoring, or deep review mining

- ❌ Does not support seller depth analysis, category crawling, or MAP compliance workflows

- ❌ Higher cost per request than general-purpose APIs when scaled to tens of thousands of requests

7. Apify: Best for Custom Walmart Workflows

Apify’s Walmart Scraper Actor covers products, prices, reviews, and inventory with a documented 95%+ success rate. Its open SDK lets teams extend scraping logic for non-standard data requirements beyond the default actor.

Key features:

- Walmart Scraper Actor covering products, prices, reviews, and inventory

- 95%+ success rate on Walmart product and search pages per Apify’s published metrics

- Open Apify SDK enables custom scraping logic and actor extension

- Free tier includes monthly platform compute credits

- Native scheduling, webhook callbacks, and multiple output format support

Pricing: Free tier includes monthly compute credits. Paid plans start at $49 per month. The Walmart Scraper Actor is billed by compute units consumed per run with no long-term commitment required.

Best for: Engineering teams that need customizable Walmart scraping workflows with scheduling and webhook integration.

Pros:

- ✅ Open SDK enables custom logic for non-standard Walmart data collection requirements

- ✅ Native scheduling and webhook callbacks for automated pipeline integration

- ✅ No long-term commitment required under the compute-unit billing model

Cons:

- ❌ 95%+ success rate is lower than dedicated Walmart API providers at production scale

- ❌ Higher per-record compute cost than dedicated APIs for large-volume Walmart workloads

- ❌ Customizing actors beyond defaults requires Apify SDK knowledge and development time

8. Nimbleway: Best Geo-Targeted Walmart Scraper

Nimbleway achieved 99.98% success on Walmart in the Proxyway benchmark and provides city- and state-level geo-targeting. That combination suits teams with regional Walmart pricing needs that do not require Bright Data’s full suite.

Key features:

- 99.98% success rate on Walmart in the Proxyway benchmark

- City- and state-level geo-targeting for regional Walmart pricing and inventory data

- AI-powered behavioral mimicry for Walmart’s combined anti-bot defenses

- Batch processing of up to 1,000 Walmart URLs simultaneously

- Built-in structured JSON output parser with no custom configuration required

Pricing: Starts at $3 per 1,000 results. Pay-as-you-go and subscription models are both available. Custom JavaScript execution and header control require higher plan tiers. A free trial is available.

Best for: Teams with city-level geo-targeting requirements for regional Walmart pricing and inventory intelligence.

Pros:

- ✅ 99.98% success rate on Walmart matching the top performers in Proxyway benchmark

- ✅ City- and state-level geo-targeting for accurate regional Walmart data collection

- ✅ Batch processing of up to 1,000 Walmart URLs per simultaneous job

Cons:

- ❌ 11.12-second median response time is the slowest of all tools reviewed

- ❌ Unlimited concurrent requests restricted to the two most expensive plan tiers

- ❌ Higher entry price than ScraperAPI and Apify for basic Walmart scraping workloads

Side-by-Side Comparison Table

The table below summarizes all 8 Walmart scrapers reviewed by positioning, starting price, and free-trial terms for direct comparison.

| Tool | Best For | Starting Price | Free Trial |
|---|---|---|---|
| Bright Data | Best Overall | $0.75/1K requests + double funds up to $500 | 7-day business trial |
| Decodo | Best Value | $0.25/1K requests | 7-day trial, 1,000 results |
| Oxylabs | Best Data Completeness | $2/1K requests | 7-day trial, 5,000 results |
| Zyte API | Fastest Response Time | $1+ per request | $5 in free credits |
| ScraperAPI | Best Budget Option | $49/month | 7-day trial, 5,000 credits |
| SerpApi | Best for Search Data | ~$50/month | 250 searches/month free |
| Apify | Best Custom Workflows | $49/month | Monthly compute credits |
| Nimbleway | Best Geo-Targeting | $3/1K results | Trial available |

How to Choose the Right Walmart Scraper

Four factors determine the right Walmart scraper: data freshness, anti-bot capability, geo-targeting precision, and team technical level. Each factor can eliminate categories of tools immediately.

Which Data Freshness Level Do You Need?

Real-time price monitoring requires a scraping API delivering structured output within seconds. Batch analysis of historical pricing and catalog changes works equally well with pre-collected bulk data. Bright Data’s pre-collected Walmart dataset contains 267 million records updated on a defined schedule. It is faster to activate than an API-based pipeline, and it costs less when daily or weekly freshness is sufficient and hourly polling is unnecessary.

Can the Tool Beat Walmart’s Defenses at Scale?

Walmart rates 9/10 in scraping difficulty. Enterprise tools including Bright Data, Oxylabs, and Decodo defeat Akamai Bot Manager and HUMAN Security automatically. Budget tools may require supplementary residential proxy infrastructure to maintain acceptable success rates at volume. A 96% success rate versus 99.98% means roughly 200 times more failed requests: about 4,000 failures per 100,000 attempts instead of 20. At enterprise scale, this difference becomes a material cost and reliability gap that compounds over time.
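The failure-count arithmetic is easy to check directly. The sketch below uses the 96% and 99.98% success rates from the paragraph above; the function name is illustrative.

```python
def failed_requests(attempts: int, success_rate_pct: float) -> int:
    """Expected number of failed requests for a success rate given in percent."""
    return round(attempts * (100 - success_rate_pct) / 100)


low = failed_requests(100_000, 96.0)    # failures at a 96% success rate
high = failed_requests(100_000, 99.98)  # failures at a 99.98% success rate

print(f"96% success:    {low:,} failures per 100,000 attempts")
print(f"99.98% success: {high:,} failures per 100,000 attempts")
print(f"ratio: {low // high}x")
```

Each failed request either has to be retried or leaves a gap in the dataset, which is why small percentage differences matter at volume.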

Do You Need City-Level Geo-Targeting?

Walmart serves different prices and inventory levels by US region. Country-level geo-targeting is not sufficient for accurate regional pricing data collection. Bright Data and Nimbleway both support city-level and state-level targeting. Decodo and Oxylabs offer country-level targeting only. For regional MAP compliance or local price comparisons, city-level precision eliminates several tools from consideration immediately.

How Technical Is Your Team?

Non-developers can configure Walmart collection using Bright Data’s no-code Web Scraper IDE. It supports point-and-click field selection and scheduled CSV delivery. Teams needing data without scraping infrastructure can use the Walmart MCP server or download pre-collected datasets directly. Engineering teams can integrate via proxy server mode or REST API, available across all tools reviewed. The choice of integration mode should match current infrastructure, not require rebuilding it.

Common Use Cases for Walmart Data

Walmart scraping serves five primary commercial use cases in 2026, spanning competitive intelligence, brand protection, catalog analysis, review mining, and AI model development.

Competitive Price Monitoring

81% of US retailers use automated price scraping for dynamic repricing, up from 34% in 2020. Walmart’s Rollback pricing, Flash Picks, and intra-day promotional formats change frequently in high-velocity categories. Consumer electronics and gaming hardware prices can shift multiple times per day. Retailers monitor these changes and adjust their own pricing in near real-time. The Walmart price tracker gives teams a structured monitoring solution without managing scraping infrastructure.

MAP Compliance Monitoring

Brands with MAP policies need to identify unauthorized Walmart Marketplace sellers who undercut agreed price floors. Manual monitoring of a large SKU catalog is not viable at scale. Automated scraping of seller names, listing prices, and product details is the only scalable approach. Bright Data and ScraperAPI return structured seller names, ratings, and prices in a single Walmart API call. This enables daily MAP compliance sweeps across thousands of SKUs.
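A daily MAP sweep over structured seller data can be as simple as the sketch below. The `Offer` fields, SKU identifiers, seller names, and price floors are all hypothetical; real field names depend on the scraper's output schema.

```python
from dataclasses import dataclass


@dataclass
class Offer:
    sku: str
    seller: str
    price: float


def find_map_violations(offers: list[Offer], map_floors: dict[str, float]) -> list[Offer]:
    """Return offers priced below the MAP floor configured for their SKU."""
    violations = []
    for offer in offers:
        floor = map_floors.get(offer.sku)
        # SKUs without a configured floor are skipped, not flagged.
        if floor is not None and offer.price < floor:
            violations.append(offer)
    return violations


# Hypothetical scraped offers and MAP floors.
offers = [
    Offer("SKU-1", "BrandStore", 49.99),
    Offer("SKU-1", "DiscountHub", 42.50),
    Offer("SKU-2", "BrandStore", 19.99),
]
map_floors = {"SKU-1": 45.00}

violations = find_map_violations(offers, map_floors)
for offer in violations:
    print(f"{offer.seller} lists {offer.sku} at ${offer.price:.2f}")
```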

Product Catalog Intelligence

Retailers use Walmart data to identify new SKU launches, discontinued products, category repositioning, and assortment gaps. Tracking Walmart’s catalog alongside Amazon’s provides near-complete coverage of US online retail assortment changes. For teams monitoring multiple platforms, the best Amazon scrapers guide covers equivalent tools for Amazon data collection. Combined, Walmart and Amazon catalog data powers assortment gap analysis across the two largest US online retailers.

Review Mining and Sentiment Analysis

Popular Walmart products accumulate thousands of customer reviews. Aggregating reviews at scale lets brands track satisfaction trends, identify complaints, and spot quality signals early. Full-featured tools return review text, star ratings, reviewer metadata, and date stamps in structured output. Sentiment analysis pipelines and LLM classifiers run directly on this structured review data without additional transformation.
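As a minimal illustration of trend tracking on structured review output, the sketch below averages star ratings by month. The record shape (`date`, `rating`, `text`) and the sample reviews are assumptions, not any tool's actual schema.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical review records in an assumed shape.
reviews = [
    {"date": "2026-01-14", "rating": 5, "text": "Works great"},
    {"date": "2026-01-29", "rating": 2, "text": "Arrived damaged"},
    {"date": "2026-02-03", "rating": 4, "text": "Good value"},
]


def monthly_average_rating(reviews: list[dict]) -> dict[str, float]:
    """Group reviews by YYYY-MM and return the mean star rating per month."""
    by_month = defaultdict(list)
    for review in reviews:
        month = review["date"][:7]  # ISO dates start with YYYY-MM
        by_month[month].append(review["rating"])
    return {month: mean(ratings) for month, ratings in sorted(by_month.items())}


trend = monthly_average_rating(reviews)
print(trend)
```

A sustained drop in the monthly average is a cue to read that month's review text more closely.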

AI and LLM Training Data

The web scraping market is valued at $1.17 billion in 2026. It is forecast to reach $2.23 billion by 2031 at a 13.78% CAGR. AI training data demand is one of the primary growth drivers. Walmart product records, pricing histories, and review text feed pricing models, demand forecasting systems, and LLMs. Bright Data serves 75% of AI training data traffic across its infrastructure. Pre-collected Walmart datasets are the fastest activation path for large-scale training pipelines. The real-time API suits systems requiring continuously refreshed data for ongoing model fine-tuning.

Key Technical Challenges When Scraping Walmart

Walmart is rated 9/10 in scraping difficulty by multiple independent sources. Four technical challenges define what separates production-grade tools from solutions that fail under real conditions.

Why Is Walmart So Hard to Scrape?

Walmart deploys three overlapping defense layers simultaneously. Akamai Bot Manager analyzes device fingerprints, TLS signatures, and JavaScript execution behavior at the network edge. HUMAN Security performs behavioral analysis to detect non-human request patterns across sessions and across IP addresses. reCAPTCHA adds a friction layer for sessions flagged by either upstream system. Basic Python requests and simple headless browsers are blocked almost immediately. Only purpose-built tools combining behavioral mimicry, managed browsers, and premium residential proxies defeat all three defense layers.

Why Does JavaScript Rendering Matter for Walmart?

Walmart builds its product pages with React. Prices, inventory status, sponsored listings, and fulfillment options all load dynamically after initial page load. A static HTML scraper retrieves only the initial page shell. It misses the majority of commercially useful structured data. Headless browser rendering is a non-negotiable requirement for complete Walmart product data extraction. A managed Scraping Browser handles rendering, fingerprint evasion, and CAPTCHA solving in a cloud environment. It removes all headless browser infrastructure management from the client side.

Which Proxy Type Works for Walmart?

Akamai’s bot detection identifies and blocks datacenter IP ranges with high accuracy. Residential proxies from real ISP-assigned IPs are significantly harder to detect and block at production scale. Mordor Intelligence reports Akamai can block 82.3% of automated traffic on select Walmart product pages. This drives demand for premium residential proxy solutions. Networks with 400M+ IPs across 195 countries provide sufficient pool size to sustain high success rates. City-level IP targeting adds commercial value beyond anti-bot evasion by enabling region-specific Walmart pricing collection.

How Do You Parse Walmart’s Layered Data Structure?

Walmart product data lives in three sources: JSON-LD schema, React state, and dynamically rendered DOM elements. A scraper reading only one source produces incomplete records with key fields missing. Purpose-built Walmart scrapers reconcile all three sources into a unified structured record using layered parsing logic. This approach produces the 600+ field counts seen in benchmark testing. Generic HTML parsers cannot replicate this field coverage reliably at production scale.
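The layered approach can be sketched with the standard library alone. The example below extracts JSON-LD from a page and merges it with two other sources, letting earlier sources win and later ones fill gaps. The HTML fragment, the `app_state` and `dom_fields` dictionaries, and the merge priority are all illustrative assumptions, not how any provider reviewed here implements parsing.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the contents of <script type="application/ld+json"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self._buffer: list[str] = []
        self.blocks: list[dict] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                parsed = json.loads("".join(self._buffer))
            except json.JSONDecodeError:
                return  # Skip malformed blocks rather than failing the page.
            if isinstance(parsed, dict):
                self.blocks.append(parsed)


def merge_sources(*sources: dict) -> dict:
    """Merge records left to right; earlier sources win, later ones fill gaps."""
    merged: dict = {}
    for source in sources:
        for key, value in source.items():
            if key not in merged and value is not None:
                merged[key] = value
    return merged


# Hypothetical page fragment; real pages are far larger.
html = """
<html><head>
<script type="application/ld+json">
{"@type": "Product", "name": "Example Widget", "sku": "12345"}
</script>
</head><body></body></html>
"""
extractor = JsonLdExtractor()
extractor.feed(html)
json_ld = extractor.blocks[0]

app_state = {"price": 19.99, "name": "Example Widget (state)"}  # assumed shape
dom_fields = {"fulfillment": "pickup", "price": None}           # assumed shape

record = merge_sources(json_ld, app_state, dom_fields)
print(record)
```

Here the JSON-LD name takes priority, the price comes from the application state, and the fulfillment option comes from the rendered DOM. A production parser would also need per-field rules for resolving conflicts between sources.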

Ready to collect Walmart product data at production scale? Start a free trial of Bright Data and access the most reliable Walmart scraping infrastructure available.

Frequently Asked Questions

Q: What makes Walmart so hard to scrape in 2026?

Walmart deploys a multi-layer anti-bot stack: Akamai Bot Manager handles device fingerprinting and JavaScript execution challenges at the network layer, HUMAN Security (formerly PerimeterX) performs behavioral analysis to detect non-human patterns, and reCAPTCHA adds an additional friction layer. Multiple independent scraping analysis sources rate Walmart at 9/10 difficulty in 2026. Basic Python requests and simple headless browsers are blocked almost immediately. Production-grade tools like Bright Data’s Web Scraping API and Scraping Browser are built specifically to defeat these systems automatically.

Q: Do I need residential proxies to scrape Walmart at scale?

Yes. Walmart’s Akamai Bot Manager aggressively identifies and blocks datacenter IP ranges, which are trivially identifiable as non-residential traffic. Residential proxies sourced from real ISP-assigned IPs are significantly harder to detect and block. Bright Data’s network of 400M+ residential IPs across 195 countries supports city-level targeting. That matters for Walmart because it serves different prices and inventory by US region, so city-level targeting is commercially important beyond anti-bot evasion.

Q: What data fields can I extract from Walmart product pages?

A full-featured Walmart scraper can extract: product title, URL, SKU and GTIN identifiers, current and original price, currency, availability and inventory status, seller name and rating, fulfillment options (pickup, delivery, shipping), product specifications and attribute table, image URLs, top customer reviews, aggregate star rating, review count, breadcrumb category path, and sponsored listing indicators. Tools like Decodo extract 650+ distinct fields per product page by combining DOM parsing with embedded JSON-LD and React application state extraction.

Q: What is the difference between a Walmart scraper API and a Walmart dataset?

A Walmart scraper API extracts data on demand in real time: you send a URL or product keyword and receive structured data within seconds. It is the right choice for price monitoring, inventory alerts, and any workflow requiring fresh data on a defined schedule. A Walmart dataset (such as Bright Data’s 267M-record collection at /products/datasets/walmart) is pre-collected, regularly refreshed bulk data available for immediate download in CSV, JSON, or other formats. Datasets are faster to activate, require no scraping infrastructure, and are better suited for large-scale historical analysis, AI model training, or catalog benchmarking.

Q: How often should I scrape Walmart prices to stay competitive?

For most product categories, daily scraping is sufficient to support competitive repricing decisions. For high-velocity categories like consumer electronics, gaming hardware, and daily deals, scraping every 4 to 6 hours captures intra-day changes more reliably. Walmart’s promotional pricing formats (Rollbacks, Clearance, Flash Picks) can change within hours, so scraping cadence should match your repricing response speed. Real-time streaming is technically possible but creates disproportionate infrastructure cost relative to the marginal freshness gain for most use cases.
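Cadence translates directly into request volume, which drives cost. The sketch below estimates daily requests for a given catalog size and refresh interval; the SKU counts are hypothetical, and the 4-hour interval is taken from the range mentioned above.

```python
import math


def daily_requests(sku_count: int, interval_hours: float) -> int:
    """Requests per day needed to refresh every SKU once per interval."""
    if interval_hours <= 0:
        raise ValueError("interval_hours must be positive")
    runs_per_day = 24 / interval_hours
    return math.ceil(sku_count * runs_per_day)


# Hypothetical catalog: most SKUs daily, a high-velocity subset every 4 hours.
standard = daily_requests(10_000, 24)
high_velocity = daily_requests(2_000, 4)

print(f"standard SKUs:      {standard:,} requests/day")
print(f"high-velocity SKUs: {high_velocity:,} requests/day")
print(f"total:              {standard + high_velocity:,} requests/day")
```

In this example the high-velocity subset is one fifth the size of the standard catalog but generates more requests per day, which is why cadence is worth setting per category rather than globally.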

Q: Can I scrape Walmart without writing any code?

Yes. Bright Data offers a no-code Web Scraper IDE where you configure the target URL, select fields from a point-and-click interface, and schedule CSV or JSON delivery without writing a single line of code. Bright Data’s pre-collected Walmart Datasets at /products/datasets/walmart require no scraping at all: the data is already collected, structured, and ready to download or query via API. Apify’s Walmart Scraper Actor also supports non-developer use through its web-based actor configuration interface.

Q: How does pay-per-success pricing work for Walmart scraping?

Pay-per-success means you are billed only when the scraper returns a valid, complete result. If a Walmart request is blocked by anti-bot defenses or returns an error page, that attempt incurs zero cost. Bright Data’s Web Scraping API uses pay-per-success pricing at $1.50 per 1,000 successful requests. For a high-difficulty target like Walmart, this model significantly reduces cost uncertainty compared to pay-per-request pricing where you are billed regardless of whether the scraper succeeded or was blocked.